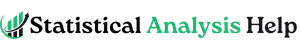

How to Clean Data in Python

Raw data rarely arrives ready for analysis. A dataset may contain missing values, duplicated rows, inconsistent labels, mixed date formats, blank cells, wrong data types, or numbers stored as text. None of those problems look dramatic at first, yet they can quietly damage the quality of the final results. A regression model, dashboard, summary table, or machine learning workflow is only as reliable as the data behind it.

That is why learning how to clean data in Python matters. Python makes data cleaning practical, repeatable, and much easier to document than manual spreadsheet editing. A well-cleaned dataset gives the analysis a stronger foundation, reduces avoidable errors, and makes the final work easier to explain. In research, coursework, dissertations, projects, and business reporting, that first layer of preparation often shapes everything that follows.

At this stage, many datasets are not truly difficult to analyze. They are simply unprepared. Once the entries are standardized, the missing values reviewed, the columns formatted correctly, and the obvious errors removed, the analysis becomes far more straightforward. That is one reason data cleaning often sits at the center of broader data analysis and research statistics work.

If your dataset needs to be analyzed but still looks disorganized, Request Quotes Now for help with cleaning, structuring, and preparing the data properly.

What Data Cleaning in Python Really Means

Data cleaning in Python means identifying and correcting issues in a dataset before moving into analysis, modeling, or reporting. In practice, that usually includes checking for missing values, removing duplicate records, fixing inconsistent text labels, converting columns into the correct data types, cleaning dates, reviewing outliers, and making the structure of the dataset easier to work with.

Python is especially useful here because the cleaning steps can be written as a workflow rather than performed manually one click at a time. That makes the process easier to repeat, easier to audit, and much easier to scale when the dataset grows.

Most data cleaning in Python is done with pandas, since it provides straightforward tools for inspecting and transforming tabular data. Depending on the project, numpy, re, and visualization libraries may also help, but pandas is usually where the practical cleaning work begins.

Why Clean Data Before Analysis

A dataset can look complete and still contain problems that distort results. A column of dates may mix text and timestamp formats. A categorical variable may contain labels such as Male, male, M, and m, all referring to the same category. A score column may be stored as text instead of numeric values. Duplicate rows may inflate counts. Missing values may quietly reduce the sample used in a model.

These problems affect more than presentation. They affect conclusions. A frequency table can become misleading, a summary statistic can shift, a visualization can look wrong, and a model can fail or behave unpredictably.

That is why data cleaning belongs before hypothesis testing, regression, or predictive analysis. It is often the difference between an analysis that feels unstable and one that reads clearly from start to finish. Projects that later move into inferential statistics or broader statistical analysis usually become much easier once the data are cleaned first.

Common Data Problems in Python Datasets

Most dirty datasets repeat the same kinds of problems. The exact variables may change, but the underlying issues are often familiar.

| Problem | What it looks like |
|---|---|
| Missing values | Blank cells, NaN, None, empty strings |
| Duplicate rows | Repeated records or IDs |
| Inconsistent text | US, United State, UNITED STATED |
| Wrong data types | Numbers stored as strings |
| Mixed date formats | 2026-04-10, 10/04/2026, April 10, 2026 |
| Outliers or invalid values | Impossible ages, negative quantities, wrong scales |
| Messy column names | Spaces, symbols, inconsistent naming |
| Category drift | Similar labels recorded in different ways |

The goal of cleaning is not to force the data to look perfect. The goal is to make the dataset accurate, consistent, and ready for valid analysis.

A Practical Python Data Cleaning Workflow

A strong workflow usually starts by loading the data, inspecting the structure, checking the size of the dataset, reviewing missing values, and looking at a few rows before any changes are made.

import pandas as pd

df = pd.read_csv("data.csv")

print(df.head())

df.info()  # info() prints its summary itself and returns None

print(df.shape)

print(df.isna().sum())

This first pass already reveals a lot. head() shows the first rows. info() shows each column's data type and non-null count. shape shows the number of rows and columns. isna().sum() shows where missing data are concentrated.

That first review often tells you where the real work is. Some columns may need renaming. Others may need type conversion. Some may need to be dropped entirely if they contain too much missing or irrelevant information.
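
As a rough illustration of that first review, the sketch below builds a small made-up DataFrame and puts each column's data type, share of missing values, and number of distinct values into one table. The column names and values are invented for this example, and the `overview` table is just one possible layout.

```python
import numpy as np
import pandas as pd

# Hypothetical sample data, invented purely for illustration.
df = pd.DataFrame({
    "student_id": [1, 2, 3, 4],
    "score": ["78", "85", None, "n/a"],        # numbers stored as text
    "notes": [np.nan, np.nan, np.nan, "late"],  # mostly empty column
})

# One row per column: dtype, share of missing values, distinct non-missing values.
overview = pd.DataFrame({
    "dtype": df.dtypes.astype(str),
    "missing_share": df.isna().mean(),
    "n_unique": df.nunique(),
})
print(overview)
```

Note that the text entry "n/a" is not counted as missing here. It only shows up as a problem once the column is converted to a numeric type.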

How to Handle Missing Values in Python

Missing data are one of the most common cleaning issues. The right response depends on the meaning of the variable and the amount of missingness in the dataset.

In Python, missing values are often examined like this:

df.isna().sum().sort_values(ascending=False)

If only a few rows contain missing values in a key variable, dropping those rows may be reasonable:

df = df.dropna(subset=["income"])

If the column is numeric and missing values are limited, imputation may be more appropriate:

df["income"] = df["income"].fillna(df["income"].median())

For categorical variables, a placeholder label is sometimes used:

df["gender"] = df["gender"].fillna("Unknown")

The important point is that missing data should not be handled blindly. A cleaning decision should make sense for the variable, the study design, and the purpose of the analysis.
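
One way to keep an imputation decision visible is to record which rows were missing before filling them. The sketch below also fills each gap with the median of its own group rather than the overall median. The `region` column, the income figures, and the `income_was_missing` name are assumptions made up for this example, and group-wise imputation is only one of several reasonable choices.

```python
import numpy as np
import pandas as pd

# Hypothetical data: "region" and the income figures are made up.
df = pd.DataFrame({
    "region": ["north", "north", "north", "south", "south", "south"],
    "income": [40.0, np.nan, 60.0, 100.0, 120.0, np.nan],
})

# Record which rows were missing before any value is filled in.
df["income_was_missing"] = df["income"].isna()

# Fill each gap with the median of its own region rather than the overall median.
region_median = df.groupby("region")["income"].transform("median")
df["income"] = df["income"].fillna(region_median)

print(df)
```

Keeping the indicator column makes it possible to rerun an analysis later with and without the imputed rows.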

How to Remove Duplicates in Python

Duplicate records can distort totals, means, counts, and model results. Sometimes the duplication is obvious. Sometimes it only appears when checking IDs or combinations of fields.

A quick way to count duplicates is:

df.duplicated().sum()

To remove exact duplicate rows:

df = df.drop_duplicates()

If duplication should be checked based on a specific field such as a respondent ID:

df = df.drop_duplicates(subset=["respondent_id"])

This is especially useful in survey datasets, transaction records, and exported system data where the same row may appear more than once after merging or repeated downloads.
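
Before dropping anything, it can help to look at every row that shares an ID, and to decide which version should survive. The sketch below assumes a hypothetical `submitted_at` timestamp column and keeps the most recent submission per respondent. The data are invented for illustration.

```python
import pandas as pd

# Hypothetical survey export; "submitted_at" is an assumed timestamp column.
df = pd.DataFrame({
    "respondent_id": [101, 102, 101, 103],
    "submitted_at": pd.to_datetime(
        ["2026-01-05", "2026-01-06", "2026-01-09", "2026-01-07"]
    ),
    "answer": ["yes", "no", "no", "yes"],
})

# keep=False marks every row that shares an ID, so all versions can be reviewed.
conflicts = df[df.duplicated(subset=["respondent_id"], keep=False)]
print(conflicts)

# Keep the most recent submission per respondent instead of whichever comes first.
deduped = (
    df.sort_values("submitted_at")
      .drop_duplicates(subset=["respondent_id"], keep="last")
      .sort_values("respondent_id")
      .reset_index(drop=True)
)
print(deduped)
```

By default drop_duplicates() keeps the first occurrence, so sorting first is what makes the choice of surviving row deliberate.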

How to Standardize Text Values

Text inconsistency is one of the most underestimated data cleaning problems. A category column may appear to have ten unique values when it really contains four valid categories written in multiple ways.

A simple cleanup often starts by trimming spaces and standardizing letter case:

df["country"] = df["country"].str.strip().str.lower()

That turns entries like Kenya, KENYA, and kenya into one consistent value.

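You can also map inconsistent category labels to a single form. Because replace() matches exact, case-sensitive values, normalize spacing and case first:

df["gender"] = df["gender"].str.strip().str.lower().replace({
    "m": "Male",
    "male": "Male",
    "f": "Female",
    "female": "Female",
})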

This step matters because analysis depends on consistency. Group summaries, visualizations, and models all become cleaner when the labels are standardized early.
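
A mapping only helps for the labels it anticipates, so it is worth checking what it missed. The sketch below uses map() instead of replace() so that any label outside the mapping becomes missing and can be listed. The raw labels and the `gender_map` dictionary are assumptions invented for this example.

```python
import pandas as pd

# Hypothetical raw labels, including one the mapping does not anticipate.
df = pd.DataFrame({"gender": [" Male", "m", "F", "female ", "MALE", "fem", None]})

# Assumed mapping; extend it to match the labels in your own data.
gender_map = {"m": "Male", "male": "Male", "f": "Female", "female": "Female"}

normalized = df["gender"].str.strip().str.lower()
df["gender_clean"] = normalized.map(gender_map)

# Labels that were present but not covered by the mapping need a manual decision.
unmapped = sorted(normalized[df["gender_clean"].isna() & normalized.notna()].unique())
print(unmapped)
print(df["gender_clean"].value_counts(dropna=False))
```

Here the unexpected label "fem" is reported instead of silently surviving as its own category.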

How to Convert Data Types Correctly

A dataset often contains columns stored in the wrong format. A number may be read as text because of commas, spaces, or mixed characters. A date may remain as a string. A categorical field may need to be treated explicitly as a category.

To convert a numeric-looking column:

df["score"] = pd.to_numeric(df["score"], errors="coerce")

The errors="coerce" part turns invalid entries into missing values instead of breaking the process.

To convert dates:

df["exam_date"] = pd.to_datetime(df["exam_date"], errors="coerce")

To convert a text variable into a category:

df["department"] = df["department"].astype("category")

Correct data types make later work much easier. Summary statistics, grouping, modeling, and plotting all depend on the data being stored in the right form.
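
When numbers arrive as text because of commas or currency symbols, converting them directly would turn valid values into missing ones. The sketch below strips those characters first and then lists the entries that still failed to convert. It assumes the comma is a thousands separator, which is not true for every locale. The `price` column and its values are made up.

```python
import pandas as pd

# Hypothetical column where numbers arrived as text with symbols and separators.
df = pd.DataFrame({"price": ["1,200", "$350", " 99.5 ", "unknown", None]})

# Strip currency symbols, thousands separators, and whitespace before converting.
stripped = df["price"].str.replace(r"[$,\s]", "", regex=True)
df["price_num"] = pd.to_numeric(stripped, errors="coerce")

# Values that were present as text but could not be converted deserve a manual look.
failed = df.loc[df["price"].notna() & df["price_num"].isna(), "price"]
print(df)
print(failed.tolist())
```

Comparing missing values before and after the conversion shows exactly how much information errors="coerce" discarded.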

How to Clean Dates in Python

Dates often arrive in messy form. A single column may contain slashes, hyphens, month names, inconsistent ordering, or invalid entries. Once the date column is standardized, it becomes much easier to sort records, filter by period, calculate durations, or create time-based summaries.

A clean starting point is:

df["date"] = pd.to_datetime(df["date"], errors="coerce")

If the format is known, it can be specified directly:

df["date"] = pd.to_datetime(df["date"], format="%d/%m/%Y", errors="coerce")

After conversion, you can extract useful parts of the date:

df["year"] = df["date"].dt.year

df["month"] = df["date"].dt.month

This is especially helpful in reporting projects, administrative data, sales analysis, and research datasets where time structure matters.
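
When one column genuinely mixes several layouts, a single conversion can leave some valid dates as missing, because recent pandas versions tend to infer one format and apply it to every entry. A cautious alternative, sketched below, is to try each known format in turn and keep the first successful parse. The list of formats is an assumption, and treating the slash dates as day-first is a guess that must be confirmed against the data source.

```python
import pandas as pd

# Hypothetical column mixing three layouts plus one invalid entry.
raw = pd.Series(["2026-04-10", "10/04/2026", "April 10, 2026", "not a date"])

# Assumed formats, tried in order; day-first is an assumption about the slash dates.
formats = ["%Y-%m-%d", "%d/%m/%Y", "%B %d, %Y"]

# Start with the first format, then fill remaining gaps using the other formats.
parsed = pd.to_datetime(raw, format=formats[0], errors="coerce")
for fmt in formats[1:]:
    parsed = parsed.fillna(pd.to_datetime(raw, format=fmt, errors="coerce"))

print(parsed)
```

Entries that match none of the formats stay missing, which makes them easy to count and review.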

How to Clean Column Names

Messy column names can make a dataset harder to work with than it needs to be. Spaces, symbols, inconsistent capitalization, and long labels slow down the workflow and increase the chance of errors.

A clean and common approach is:

df.columns = (
    df.columns
    .str.strip()
    .str.lower()
    .str.replace(" ", "_")
)

This turns names such as Student Age, Exam Score, and Department Name into student_age, exam_score, and department_name.

Short, consistent column names make code easier to read and make the cleaning process more manageable from start to finish.
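
Replacing spaces alone leaves symbols such as %, #, or parentheses in place. The sketch below uses a small helper, `to_snake_case`, which is a hypothetical name introduced for this example, to collapse any run of non-alphanumeric characters into a single underscore. The messy headers are invented.

```python
import re
import pandas as pd

def to_snake_case(name: str) -> str:
    """Lowercase a label and collapse any run of non-alphanumerics into one underscore."""
    cleaned = re.sub(r"[^0-9a-zA-Z]+", "_", str(name).strip().lower())
    return cleaned.strip("_")

# Hypothetical messy headers, invented for illustration.
df = pd.DataFrame(columns=[" Student Age ", "Exam Score (%)", "Dept.  Name", "ID#"])
df = df.rename(columns=to_snake_case)
print(list(df.columns))
```

Two different raw headers can end up with the same cleaned name, so checking df.columns.is_unique afterwards is a sensible precaution.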

How to Review Outliers and Invalid Values

Not every unusual value is an error, but every unusual value deserves a closer look. Some values are genuinely extreme. Others are data-entry problems.

A quick descriptive review can help:

df["age"].describe()

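If the variable represents age and you find values such as -5 or 240, those values clearly need attention. One option is to replace them with missing values so they no longer feed into summaries or models, using bounds that suit the population being studied: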

df.loc[(df["age"] < 0) | (df["age"] > 100), "age"] = pd.NA

For visual review, a boxplot can help identify extreme cases quickly. Outlier handling should always be tied to context. A very high income may be real. A negative income or impossible age usually is not.

This stage often matters a great deal before moving into research statistics or model-based analysis, because invalid values can distort later results in quiet but serious ways.
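
When no hard limits are known in advance, a common rule of thumb is to flag values lying more than 1.5 times the interquartile range beyond the quartiles. That rule is a convention brought in for this sketch, not a universal standard, and the ages below are made up. The example adds a flag column rather than overwriting anything, so the original values stay available for review.

```python
import pandas as pd

# Hypothetical ages, including two entries that cannot be real.
df = pd.DataFrame({"age": [23, 31, 27, 45, 38, -5, 240, 29]})

# Quartiles and the conventional 1.5 * IQR fences.
q1, q3 = df["age"].quantile([0.25, 0.75])
iqr = q3 - q1
lower, upper = q1 - 1.5 * iqr, q3 + 1.5 * iqr

# Flag rather than overwrite, so the original values stay available for review.
df["age_flag"] = ~df["age"].between(lower, upper)
print(df[df["age_flag"]])
```

Flagged rows still need a judgment call: a flagged value may be a typo, a unit problem, or a real but rare observation.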

How to Clean Strings With Regular Expressions

Some text columns contain extra symbols, punctuation, or patterns that need targeted cleanup. Python handles this well with string methods and regular expressions.

For example, to remove non-numeric characters from a phone field:

df["phone"] = df["phone"].str.replace(r"\D", "", regex=True)

To remove extra spaces:

df["name"] = df["name"].str.replace(r"\s+", " ", regex=True).str.strip()

This is useful when working with imported forms, exported systems, web-scraped data, or manually entered records.
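
Regular expressions can also pull structured pieces out of free text instead of only deleting characters. The sketch below uses str.extract() with named groups to split a measurement into a number and a unit. The `weight` column, its values, and the pattern are assumptions made up for this example, and real data may need a stricter pattern.

```python
import pandas as pd

# Hypothetical free-text measurements, invented for illustration.
df = pd.DataFrame({"weight": ["72kg", "68.5 kg", "approx 80 KG", "unknown"]})

# Capture the first number (with optional decimal part) and an optional unit after it.
parts = df["weight"].str.extract(r"(?P<value>\d+(?:\.\d+)?)\s*(?P<unit>[A-Za-z]+)?")
df["weight_value"] = pd.to_numeric(parts["value"], errors="coerce")
df["weight_unit"] = parts["unit"].str.lower()
print(df)
```

Rows without any number, such as "unknown", end up with missing values in both new columns.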

Example of a Simple End-to-End Data Cleaning Workflow in Python

A compact cleaning workflow might look like this:

import pandas as pd

df = pd.read_csv("students.csv")

df.columns = df.columns.str.strip().str.lower().str.replace(" ", "_")

df["gender"] = df["gender"].str.strip().str.lower().replace({
    "m": "male",
    "f": "female"
})

df["score"] = pd.to_numeric(df["score"], errors="coerce")

df["exam_date"] = pd.to_datetime(df["exam_date"], errors="coerce")

df = df.drop_duplicates(subset=["student_id"])

df["score"] = df["score"].fillna(df["score"].median())

df.loc[(df["age"] < 0) | (df["age"] > 100), "age"] = pd.NA

df.info()  # info() prints its summary itself and returns None

print(df.head())

This kind of sequence is useful because it turns a messy raw file into a structured dataset that is easier to analyze, summarize, and report.
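
After a sequence like this, a few explicit checks can confirm that the cleaned dataset meets the expectations the cleaning was meant to enforce. The sketch below is one possible shape for such a check. `validate_students` is a hypothetical helper, and the column names and the 0 to 100 age range simply mirror the example workflow.

```python
import pandas as pd

def validate_students(df: pd.DataFrame) -> list:
    """Return a list of human-readable problems; an empty list means all checks passed."""
    problems = []
    if df["student_id"].duplicated().any():
        problems.append("duplicate student_id values")
    if not pd.api.types.is_numeric_dtype(df["score"]):
        problems.append("score is not numeric")
    if df["score"].isna().any():
        problems.append("score still has missing values")
    # Missing ages are allowed here; only present values outside the range are reported.
    bad_age = df["age"].notna() & ~df["age"].between(0, 100)
    if bad_age.any():
        problems.append("age outside the 0-100 range")
    return problems

# Hypothetical cleaned output with one remaining issue, invented for illustration.
cleaned = pd.DataFrame({
    "student_id": [1, 2, 3],
    "score": [78.0, 85.0, 91.0],
    "age": [19.0, 21.0, 140.0],
})
print(validate_students(cleaned))
```

Returning a list of problems rather than raising on the first one makes it easier to see everything that still needs attention in a single run.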

Common Mistakes When Cleaning Data in Python

One common mistake is cleaning data without first inspecting it carefully. Another is dropping too many rows without checking how much information is being lost. A third is changing values without documenting what was changed and why.

Many problems also come from cleaning only the obvious columns while ignoring inconsistent categories, data types, or duplicate IDs. In larger projects, manual editing outside the code can create even more confusion later because the workflow becomes hard to reproduce.

The best cleaning process is not just correct. It is traceable. The logic should remain visible from the raw file to the final dataset.
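
One lightweight way to keep the process traceable is to run the cleaning as a list of named steps and record how many rows each step leaves behind. The sketch below is a minimal version of that idea. `run_steps`, the step labels, and the sample data are all hypothetical, and a real project might log more detail, such as which values changed.

```python
import pandas as pd

def run_steps(df, steps):
    """Apply each (label, function) step in order and record row counts before and after."""
    log = []
    for label, func in steps:
        rows_before = len(df)
        df = func(df)
        log.append({"step": label, "rows_before": rows_before, "rows_after": len(df)})
    return df, pd.DataFrame(log)

# Hypothetical raw data and step names, invented for illustration.
raw = pd.DataFrame({
    "student_id": [1, 2, 2, 3, 4],
    "score": ["78", "85", "85", "n/a", "91"],
})

steps = [
    ("drop duplicate IDs", lambda d: d.drop_duplicates(subset=["student_id"])),
    ("convert score to numeric",
     lambda d: d.assign(score=pd.to_numeric(d["score"], errors="coerce"))),
    ("drop rows without a score", lambda d: d.dropna(subset=["score"])),
]

clean, log = run_steps(raw, steps)
print(log)
```

Because every step returns a new DataFrame, the raw data stay untouched and the log shows where rows were lost.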

How Data Cleaning in Python Supports Better Analysis

Clean data make every later step easier. Descriptive tables become more accurate. Visualizations become easier to trust. Regression and hypothesis testing rest on a better foundation. Even simple summaries become more dependable.

That is why Python data cleaning often sits directly before modeling, interpretation, and reporting. The cleaning stage does not sit outside the analysis. It is part of the analysis. A strong project usually treats it that way.

Work that later moves into inferential statistics or fuller statistical analysis often improves noticeably once the dataset has been cleaned properly and consistently.

FAQ: How to Clean Data in Python

What is the best library for cleaning data in Python?

pandas is usually the main library for data cleaning in Python because it provides strong tools for missing values, duplicates, type conversion, text cleanup, filtering, and reshaping.

How do you check for missing values in Python?

A common method is df.isna().sum(), which shows the number of missing values in each column.

How do you remove duplicate rows in Python?

You can use df.drop_duplicates() for exact duplicate rows or df.drop_duplicates(subset=["id"]) when duplication should be checked using a specific variable.

How do you clean text columns in Python?

Text columns are often cleaned with methods such as str.strip(), str.lower(), str.upper(), replace(), and regular expressions for more targeted cleanup.

How do you convert strings to numbers in Python?

pd.to_numeric() is commonly used, often with errors="coerce" so invalid values become missing rather than causing the code to fail.

How do you clean dates in Python?

pd.to_datetime() is commonly used to convert text dates into proper datetime format, which then makes filtering and extraction much easier.

Should you drop or fill missing values?

That depends on the dataset, the variable, and the purpose of the analysis. Some missing values should be dropped, while others are better handled through imputation or clear labeling.

Why is data cleaning important before analysis?

Because dirty data can distort descriptive statistics, visualizations, tests, and models. Clean data make later conclusions more reliable.

Final Thoughts

Knowing how to clean data in Python is one of the most valuable practical skills in data analysis. It helps transform a messy dataset into something usable, interpretable, and ready for serious work. Once the structure is corrected, the labels are consistent, the missing values are handled thoughtfully, and the data types are fixed, the analysis becomes much easier to trust.

If your Python dataset needs cleanup before analysis, reporting, or modeling, Request Quotes Now for expert support with cleaning, preparation, interpretation, and statistical analysis.
