How to Check for Multicollinearity

Checking for multicollinearity is one of the most important steps before interpreting a multiple regression model. Many students run a regression, look only at the significance values, and move straight into interpretation. The problem is that highly correlated predictors can distort the model in ways that make coefficients unstable, inflate standard errors, and weaken confidence in the results. A variable may look non-significant not because it has no relationship with the outcome, but because it overlaps too strongly with another predictor in the model.

That is why knowing how to check for multicollinearity matters. It helps you judge whether your predictors are contributing unique information or whether they are competing with one another. This is especially important in dissertations, theses, assignments, journal papers, and project reports where the goal is not only to run a model, but to explain it clearly and defend it academically.

In practical work, multicollinearity is usually checked with a combination of correlation analysis, tolerance values, variance inflation factor, and in some cases condition indices. The best approach is not to rely on a single number. Instead, the model should be reviewed from more than one angle before the regression is interpreted. If you also need broader support with model selection, assumptions, and write-up, pages such as Statistical Analysis Help, Inferential Statistics Help, and Research Statistics Help fit naturally with this topic.


Quick Answer: How to Check for Multicollinearity

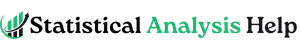

The most common way to check for multicollinearity is to run a multiple regression model and review the VIF and tolerance values for the predictor variables.

A simple interpretation is often used:

| Diagnostic | Common guideline |
|---|---|
| Pairwise correlation | Above .80 may suggest concern |
| Tolerance | Below .20 suggests a problem |
| Tolerance | Below .10 suggests a serious problem |
| VIF | Above 5 often suggests concern |
| VIF | Above 10 often suggests a serious problem |
| Condition Index | Above 30 may indicate severe multicollinearity |

These are guidelines, not rigid laws. The best judgment comes from looking at the overall pattern rather than forcing every dataset into one fixed cutoff.

What Multicollinearity Means in Regression

Multicollinearity happens when two or more predictor variables in a regression model are strongly related to each other. This does not mean that they are related to the dependent variable. It means that they are related to one another so strongly that the model struggles to separate their unique effects.

Imagine a model that includes monthly income and annual income as separate predictors. Both variables carry nearly the same information. If they are entered together, the model may have difficulty deciding how much unique contribution each one makes. As a result, the coefficients may become unstable and their standard errors may increase.

This is why multicollinearity matters most when the purpose of the model is interpretation. If the goal is to explain which predictors matter, how strongly they matter, and in what direction, high overlap among predictors becomes a serious concern.

Why Checking for Multicollinearity Matters

A model with multicollinearity can still produce a high R-squared and even a significant overall F-test. That is what makes the problem easy to miss. The model may look strong at first glance, yet the individual coefficients may be unreliable.

Several warning signs often appear when multicollinearity is present. A predictor that should logically matter may become non-significant. A coefficient may change sign unexpectedly after another variable is added. Standard errors may become larger than expected. Predictors may also look unstable across similar model versions.
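The inflated standard errors described above can be seen in a small simulation. The sketch below is illustrative only: the data are synthetic, and the helper name `ols_with_se`, the sample size, and the noise levels are assumptions chosen for the demonstration. It fits the same two-predictor model twice, once with unrelated predictors and once with a second predictor that is nearly a copy of the first.

```python
import numpy as np

def ols_with_se(X, y):
    """Return OLS coefficients and standard errors (an intercept is added here)."""
    n = X.shape[0]
    design = np.column_stack([np.ones(n), X])
    xtx_inv = np.linalg.inv(design.T @ design)
    beta = xtx_inv @ design.T @ y
    resid = y - design @ beta
    sigma2 = resid @ resid / (n - design.shape[1])  # residual variance
    se = np.sqrt(sigma2 * np.diag(xtx_inv))
    return beta, se

rng = np.random.default_rng(0)
n = 200
x1 = rng.normal(size=n)
x2_distinct = rng.normal(size=n)                 # unrelated to x1
x2_overlap = x1 + rng.normal(scale=0.1, size=n)  # nearly a copy of x1

noise = rng.normal(size=n)
y_distinct = 1.0 + 2.0 * x1 + 1.0 * x2_distinct + noise
y_overlap = 1.0 + 2.0 * x1 + 1.0 * x2_overlap + noise

_, se_distinct = ols_with_se(np.column_stack([x1, x2_distinct]), y_distinct)
_, se_overlap = ols_with_se(np.column_stack([x1, x2_overlap]), y_overlap)

# Index 1 is the coefficient on x1 (index 0 is the intercept)
print("SE of x1 coefficient, distinct predictors:   ", round(se_distinct[1], 3))
print("SE of x1 coefficient, overlapping predictors:", round(se_overlap[1], 3))
```

The true effect of x1 is the same in both models. Only the overlap with the second predictor changes, and that alone is enough to make the estimate for x1 far less precise.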

That is why multicollinearity should be checked before drawing conclusions from the coefficients. If the model is being used in academic writing, this step helps avoid weak interpretation and protects the credibility of the results section. This is closely related to SPSS Analysis Help because many students first discover multicollinearity when they begin interpreting regression output in SPSS.

High multicollinearity does not always mean the model must be abandoned, but it does mean the results should be reviewed carefully before interpretation.

How to Check for Multicollinearity in Regression

At the general level, multicollinearity is checked in regression by combining four main approaches.

1. Review the Correlation Matrix

The first step is often to inspect the correlations among predictor variables. If two predictors are extremely highly correlated, that is an early warning sign. Many researchers begin to pay attention when correlations move above .80.

Still, correlation alone is not enough. A model can show moderate pairwise correlations and still have a multicollinearity problem once several predictors are combined together. That is why correlation analysis is useful as a starting point, not the final decision.
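A short synthetic example can show how a correlation matrix misses overlap that involves more than two variables. In this sketch, the variable names and noise level are assumptions made for illustration: x3 is built from x1 and x2 together, so no single pairwise correlation looks extreme, yet x3 is almost fully explained by the other two predictors.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 500
x1 = rng.normal(size=n)
x2 = rng.normal(size=n)
x3 = x1 + x2 + rng.normal(scale=0.1, size=n)  # depends on x1 and x2 jointly

predictors = np.column_stack([x1, x2, x3])
corr = np.corrcoef(predictors, rowvar=False)
max_pairwise = np.max(np.abs(corr[np.triu_indices(3, k=1)]))

# R-squared from regressing x3 on x1 and x2 (with an intercept)
design = np.column_stack([np.ones(n), x1, x2])
coef, *_ = np.linalg.lstsq(design, x3, rcond=None)
resid = x3 - design @ coef
r2_x3 = 1 - resid @ resid / np.sum((x3 - x3.mean()) ** 2)

print(np.round(corr, 2))
print("Largest pairwise |r|:", round(max_pairwise, 2))
print("R-squared of x3 on x1 and x2:", round(r2_x3, 3))
```

The largest pairwise correlation stays under the .80 guideline, while the R-squared for x3 is close to 1. Tolerance and VIF are built on that R-squared, which is why they catch this kind of pattern.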

2. Check the Tolerance Values

Tolerance shows how much of a predictor’s variance is not explained by the other predictors. Low tolerance means the predictor overlaps strongly with the others. A tolerance value below .20 often suggests concern, while a value below .10 usually signals a more serious issue.

3. Check the VIF Values

Variance Inflation Factor is the most widely reported diagnostic. VIF shows how much the variance of a coefficient is inflated because of overlap with other predictors. Many analysts treat VIF above 5 as concerning and above 10 as severe. The exact cutoff can vary, but rising VIF values always deserve attention.
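Tolerance and VIF are two views of the same quantity. If each predictor is regressed on all the other predictors, tolerance is 1 minus the R-squared from that regression, and VIF is 1 divided by tolerance. The sketch below computes both with NumPy. The function name `tolerance_and_vif` and the synthetic data are assumptions for illustration, not the output of any particular package.

```python
import numpy as np

def tolerance_and_vif(X):
    """Tolerance and VIF for each column of X (rows = cases, columns = predictors)."""
    X = np.asarray(X, dtype=float)
    n, k = X.shape
    results = []
    for j in range(k):
        target = X[:, j]
        others = np.delete(X, j, axis=1)
        design = np.column_stack([np.ones(n), others])  # auxiliary regression with intercept
        coef, *_ = np.linalg.lstsq(design, target, rcond=None)
        resid = target - design @ coef
        r2 = 1 - resid @ resid / np.sum((target - target.mean()) ** 2)
        tol = 1 - r2                     # share of variance not explained by the others
        results.append((tol, 1 / tol))   # VIF is the reciprocal of tolerance
    return results

rng = np.random.default_rng(2)
x1 = rng.normal(size=300)
x2 = 0.9 * x1 + rng.normal(scale=0.3, size=300)  # overlaps strongly with x1
x3 = rng.normal(size=300)                        # unrelated to the others

results = tolerance_and_vif(np.column_stack([x1, x2, x3]))
for name, (tol, vif) in zip(["x1", "x2", "x3"], results):
    print(f"{name}: tolerance = {tol:.2f}, VIF = {vif:.2f}")
```

Because the two statistics are reciprocals, the tolerance cutoffs of .20 and .10 correspond exactly to the VIF cutoffs of 5 and 10.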

4. Review Collinearity Diagnostics

Some software packages provide condition index and related diagnostics. A condition index above 30 is often treated as a sign of potentially serious multicollinearity, especially when several predictors are involved in the same pattern of variance.
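For readers who want to see where a condition index comes from, the following sketch follows the usual textbook construction: add an intercept column, scale every column to unit length, and divide the largest singular value by each singular value in turn. The function name `condition_indices` and the test data are assumptions, and whether a given package matches this calculation exactly should be checked against its documentation.

```python
import numpy as np

def condition_indices(X):
    """Condition indices for predictors X, with an intercept column added."""
    X = np.asarray(X, dtype=float)
    design = np.column_stack([np.ones(X.shape[0]), X])
    scaled = design / np.linalg.norm(design, axis=0)  # each column scaled to unit length
    singular_values = np.linalg.svd(scaled, compute_uv=False)
    return singular_values.max() / singular_values

rng = np.random.default_rng(3)
x1 = rng.normal(size=300)
x2 = x1 + rng.normal(scale=0.02, size=300)  # almost identical to x1
x3 = rng.normal(size=300)

ci = condition_indices(np.column_stack([x1, x2, x3]))
print(np.round(ci, 1))
```

The smallest index is always 1. One very large index, as produced here by the near-duplicate pair, points to a dimension along which the predictors carry almost no independent information.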

The strongest approach is to read all of these together instead of relying on one number in isolation.

How to Check for Multicollinearity in SPSS

Many students specifically search for how to check for multicollinearity in SPSS because SPSS is one of the most common tools used in dissertations and coursework. In SPSS, the process is straightforward once the correct option is selected.

Go to Analyze, then Regression, then Linear. Place your dependent variable in the Dependent box and your predictors in the Independent(s) box. Click Statistics, then tick Collinearity diagnostics. After running the model, SPSS adds Tolerance and VIF columns to the Coefficients table and produces an additional Collinearity Diagnostics table with eigenvalues and condition indices. If the option is not ticked, neither appears in the output.

In most student projects, the coefficients table is the main place to start. If the tolerance values are comfortably above .20 and the VIF values are well below 5, the model is usually in a safer range. If one or more predictors show low tolerance and high VIF, the model needs closer review before interpretation.

A clean academic sentence might read like this:

Multicollinearity was assessed using tolerance and VIF statistics. The tolerance values were above .20 and the VIF values were below 5, indicating that multicollinearity was not a serious concern in the model.


How to Check for Multicollinearity in R

R users usually want a fast way to examine VIF after fitting a model.

A common workflow is to fit the regression model first and then use the vif() function from the car package.

```r
library(car)  # provides vif()

model <- lm(y ~ x1 + x2 + x3, data = mydata)
vif(model)
```

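The output will return VIF values for the predictors. Values near 1 indicate little overlap. Values that begin to climb toward 5 or beyond deserve closer attention. If the model includes factors with multiple levels or other terms with more than one degree of freedom, vif() may report generalized VIF (GVIF) values instead, and interaction terms often show high values simply because they are built from their component variables. That output needs more careful interpretation, but the basic logic remains the same.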

In R-based reporting, it is still important not to stop at the numbers. The final write-up should explain whether the observed values suggest that multicollinearity is mild, moderate, or problematic.

How to Check for Multicollinearity in Python

Many researchers now use Python for regression modeling and data science work. In Python, VIF is commonly calculated with statsmodels.

```python
import pandas as pd
from statsmodels.stats.outliers_influence import variance_inflation_factor

X = df[['x1', 'x2', 'x3']]
X = X.assign(const=1)

vif_data = pd.DataFrame()
vif_data["Variable"] = X.columns
vif_data["VIF"] = [variance_inflation_factor(X.values, i) for i in range(X.shape[1])]

print(vif_data)
```

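The constant column is added because variance_inflation_factor does not add an intercept on its own, and VIF values computed without one can be misleading. The VIF reported for the constant itself is usually ignored when interpreting the results. The key focus is on the predictor variables. If the VIF values are low, multicollinearity is less likely to be a major problem. If the values are high, the model may need adjustment before the coefficients are interpreted.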

Python users often combine this with a correlation heatmap, but the same principle applies here as elsewhere: correlation is helpful, but VIF gives a more direct regression-based diagnostic.

How to Check for Multicollinearity in Stata

Stata is widely used in economics, health research, and applied social science, and it reports VIF with a single post-estimation command.

A typical workflow is to run the regression and then request VIF.

```stata
reg y x1 x2 x3
estat vif
```

Stata will display the VIF values and their reciprocals, which are tolerance values. The interpretation is similar to SPSS and R. Higher VIF and lower tolerance indicate greater overlap among predictors. If the values remain within acceptable limits, the model is generally easier to defend in the results section.

How to Check for Multicollinearity in SAS

Students and analysts who work in institutional environments that rely on SAS can request the same diagnostics there.

A common SAS approach uses PROC REG with collinearity-related options.

```sas
proc reg data=mydata;
  model y = x1 x2 x3 / vif tol collin;
run;
quit;
```

This output gives VIF, tolerance, and collinearity diagnostics. As in other platforms, the logic is not platform-specific. The goal is always to see whether the predictors are overlapping so strongly that the unique coefficient estimates become hard to trust.

How to Check for Multicollinearity in Excel

Excel is typically used for smaller datasets or early-stage academic projects. It is more limited for full regression diagnostics, but it can still provide a starting point.

A correlation matrix can be produced using the Data Analysis ToolPak. That can help identify predictors that are very highly correlated. Excel can also run regression, but it does not provide VIF as conveniently as SPSS, R, Python, Stata, or SAS. If needed, VIF can be derived manually through auxiliary regressions: regress each predictor on all the other predictors, take the R-squared from that regression, and compute VIF as 1 / (1 − R-squared). That becomes cumbersome as the model grows.

For that reason, Excel is better treated as a preliminary tool for spotting obvious overlap rather than the strongest tool for full collinearity diagnostics. If the project is academically important, a platform with direct VIF and tolerance output is usually preferable.

What Counts as a Problematic Level of Multicollinearity?

This is where many students want a simple rule, but the reality is more nuanced.

If two predictors correlate around .30 or .40, that is usually not alarming on its own. If pairwise correlations move beyond .80, that often deserves closer review. Tolerance below .20 and VIF above 5 are common warning points. Tolerance below .10 and VIF above 10 are often treated as stronger signs of a serious issue. A condition index above 30 can add more evidence that the problem is substantial.
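It helps to see how these guidelines relate to one another in the simplest case. When a model has exactly two predictors, the R-squared between them is just the squared pairwise correlation, so tolerance is 1 − r² and VIF is 1 / (1 − r²). The short sketch below tabulates that relationship. The function name is hypothetical, and the shortcut applies only to the two-predictor case.

```python
def two_predictor_diagnostics(r):
    """Tolerance and VIF implied by a pairwise correlation r between two predictors."""
    tolerance = 1 - r ** 2
    return tolerance, 1 / tolerance

for r in (0.30, 0.40, 0.80, 0.90, 0.95):
    tol, vif = two_predictor_diagnostics(r)
    print(f"r = {r:.2f}  tolerance = {tol:.2f}  VIF = {vif:.2f}")
```

With only two predictors, a correlation of .80 corresponds to a VIF of roughly 2.8, and the VIF does not pass 5 until the correlation is close to .90. The common cutoffs for correlation, tolerance, and VIF are therefore not interchangeable, which is one more reason to read them together.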

Still, interpretation should remain contextual. A model with one VIF slightly above 5 is different from a model in which several predictors are clustered with low tolerance, unstable coefficients, and high condition indices. The full pattern matters more than a rigid threshold.

What to Do if Multicollinearity Is High

Finding multicollinearity does not automatically mean the whole regression analysis should be abandoned. The right response depends on the study aim and the structure of the variables.

One solution is to remove one of the overlapping predictors if both variables capture nearly the same idea. Another is to combine highly related variables into a composite score if that makes conceptual sense. In some cases, centering helps when multicollinearity is created by interaction terms, though centering does not solve general overlap between distinct predictors. Sometimes the best decision is to keep the variables but be cautious about interpreting individual coefficients too strongly.
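The point about centering can be checked with a quick simulation. The sketch below uses synthetic data, and the variable names, means, and spreads are assumptions chosen so that the predictors sit far from zero. It compares the correlation between a predictor and its interaction term before and after mean-centering, and also confirms that centering leaves the correlation between the two original predictors unchanged.

```python
import numpy as np

rng = np.random.default_rng(4)
n = 1000
x = rng.normal(loc=50, scale=10, size=n)  # hypothetical predictor far from zero
z = rng.normal(loc=20, scale=5, size=n)   # hypothetical moderator, independent of x

# Correlation between x and the raw interaction term x * z
r_raw = np.corrcoef(x, x * z)[0, 1]

# Same correlation after mean-centering both variables
xc = x - x.mean()
zc = z - z.mean()
r_centered = np.corrcoef(xc, xc * zc)[0, 1]

# Overlap between the two predictors themselves, before and after centering
r_xz = np.corrcoef(x, z)[0, 1]
r_xz_centered = np.corrcoef(xc, zc)[0, 1]

print("x vs x*z, raw:     ", round(r_raw, 2))
print("x vs x*z, centered:", round(r_centered, 2))
print("x vs z, raw vs centered:", round(r_xz, 4), round(r_xz_centered, 4))
```

Centering sharply reduces the overlap between x and the product term, but the correlation between x and z is identical before and after. That is why centering helps with interaction terms and does nothing for two distinct predictors that measure nearly the same thing.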

The wrong response is to ignore the issue and report the model as if nothing happened. In academic writing, it is much better to acknowledge the diagnostic findings and explain the decision taken. This connects naturally with Research Statistics Help because assumption checking is part of stronger research reporting.

Common Mistakes When Checking for Multicollinearity

One common mistake is treating pairwise correlation as the only diagnostic. Correlation is useful, but it is not the whole story.

Another mistake is using one fixed cutoff without context. A VIF of 5 is not automatically catastrophic in every dataset, and a VIF below 5 does not always mean every concern has vanished.

A third mistake is focusing only on whether the overall regression is significant. Multicollinearity can exist even when the model looks strong at the overall level.

Students also sometimes confuse multicollinearity with normality or homoscedasticity. These are different assumptions and should be checked separately. A regression model can satisfy one assumption and still fail another.

Finally, many reports mention VIF but never explain what the values mean. A better results section connects the diagnostic numbers to an actual conclusion about whether the predictors are acceptably distinct.

Example of a Clean Write-Up

A short academic statement can be written like this:

Multicollinearity was examined using tolerance and variance inflation factor statistics. All tolerance values exceeded .20 and all VIF values were below 5, indicating that multicollinearity was not a serious concern among the predictor variables.

A stronger expanded version can read like this:

Before interpreting the regression coefficients, multicollinearity was assessed using tolerance and VIF diagnostics. The tolerance values ranged from .46 to .79, while the VIF values ranged from 1.27 to 2.17. These results suggested that the predictors did not exhibit problematic multicollinearity, and the regression coefficients were therefore interpreted as reasonably stable.
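When several models need to be reported, the range sentence can be generated from the diagnostics rather than typed by hand. The helper below is a minimal sketch. The function names and the middle tolerance value are hypothetical, and the wording simply mirrors the example above.

```python
def apa(value, digits=2):
    """Format a statistic bounded by 1 without its leading zero (e.g. .46)."""
    text = f"{value:.{digits}f}"
    return text[1:] if text.startswith("0.") else text

def collinearity_sentence(tolerances, vifs):
    """Build a one-sentence summary of the tolerance and VIF ranges."""
    return (
        f"The tolerance values ranged from {apa(min(tolerances))} to {apa(max(tolerances))}, "
        f"while the VIF values ranged from {min(vifs):.2f} to {max(vifs):.2f}."
    )

# Hypothetical diagnostics for three predictors
tolerances = [0.46, 0.62, 0.79]
vifs = [1 / t for t in tolerances]  # VIF is the reciprocal of tolerance

sentence = collinearity_sentence(tolerances, vifs)
print(sentence)
```

Because VIF is the reciprocal of tolerance, the two ranges in a write-up should always agree with each other. Here the lowest tolerance of .46 corresponds to the highest VIF of 2.17.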

How This Fits Into a Stronger Regression Workflow

Checking for multicollinearity should sit alongside the wider logic of regression analysis. The strongest workflow moves from variable screening to descriptive analysis, then assumption checks, then model estimation, and finally interpretation. That is why this topic works well with pages such as Inferential Statistics Help, SPSS Analysis Help, and the main Statistical Analysis Help page.

When this step is skipped, the write-up becomes more vulnerable. When it is handled well, the final regression section becomes easier to defend and easier for the reader to trust.

FAQ: How to Check for Multicollinearity

How do you check for multicollinearity in regression?

Multicollinearity in regression is commonly checked using a correlation matrix, tolerance, VIF, and sometimes condition index values. VIF and tolerance are usually the most directly reported diagnostics.

How do you check for multicollinearity in SPSS?

In SPSS, multicollinearity is checked through Analyze > Regression > Linear, then selecting Collinearity diagnostics under the statistics options. The coefficients table will show tolerance and VIF values.

How do you check for multicollinearity in R?

In R, multicollinearity is often checked by fitting a linear model and then using the vif() function from the car package.

How do you check for multicollinearity in Python?

In Python, multicollinearity is commonly checked by calculating VIF using variance_inflation_factor from statsmodels.

How do you check for multicollinearity in Stata?

In Stata, the usual approach is to run the regression model and then use estat vif to display the VIF values.

How do you check for multicollinearity in SAS?

In SAS, PROC REG with / vif tol collin is a common way to review multicollinearity diagnostics.

How do you check for multicollinearity in Excel?

In Excel, a correlation matrix can help identify obvious overlap among predictors, but Excel is limited for direct VIF diagnostics compared with SPSS, R, Python, Stata, and SAS.

What VIF is too high?

A VIF above 5 is often treated as a warning sign, while a VIF above 10 is commonly treated as more serious. The full pattern of the model should still be considered before making the final judgment.

What tolerance value indicates multicollinearity?

Tolerance below .20 often suggests concern, while tolerance below .10 is usually treated as a stronger sign of problematic multicollinearity.

Final Thoughts

Knowing how to check for multicollinearity is essential when the goal is to interpret regression results with confidence. It is not enough to run the model and report the significant predictors. The predictors must also be shown to contribute distinct information in a way that keeps the coefficient estimates reasonably stable.

That is why multicollinearity diagnostics matter in dissertation writing, thesis chapters, assignments, research reports, and applied data analysis. A model that looks impressive on the surface can still become hard to defend if predictor overlap is ignored. A short diagnostic review of VIF, tolerance, and related output often makes the difference between a weak regression section and a strong one.
